This article details the technical aspects of the higher-level blog post on accessing your data lake using off-the-shelf tools like Microsoft Excel, PowerBI or OpenOffice/LibreOffice products.

Since I don’t have an MS Excel license (and it seems like my Office 365 license does not allow me to do this), I am simply putting links on how to do this on Microsoft Excel in the reference section below.

The rest of this article will focus on doing this with LibreOffice Calc. You’re a technical bunch, so extrapolating from this to something that works in your environment should not be a massive leap.

Let’s get started.

Things you’ll need:

- The Simba Driver for 64-bit Linux of your choice available here or here

- LibreOffice 6.4.6

- Data in S3 in a format that you can easily understand (perhaps CSV data)

- Access to AWS Athena on the AWS Console

- An AWS Access Key and an AWS Secret Access Key

Nice-to-have’s:

- Access to the CloudTrail logs to see what’s going on when you do queries

- A table in Athena that contains Parquet data

Step 1: Installation

Install the Simba drivers on your Linux host (if you’re using Windows, follow the Windows instructions). This can be a bit of a pain because it assumes a bunch of things. I found for example, that I needed the ODBC package unixodbc which contains the isql and iusql CLI tools for talking to an ODBC SQL data source.

In the Simba install instructions (bundled with the driver of course), they make a distinction between installing for iODBC and unixODBC. In my experience, I found the only method to tell them apart was by the version (Version: 2.3.6-0.1build1) of the driver (which told me I was installing the unixODBC drivers) and so I could choose the appropriate options.

I haven’t done this on Mac, so certainly YMMV.

Finally, I’m on Ubuntu (a Debian based distribution, and the Simba driver is an RPM package), so you need to use Alien to convert it to a .deb to do the installation. Not a train-smash, but good to know.

Step 2: Configuration

Now that they’re installed it’s time to configure things. There are two routes to using these:

- either using a data source name (DSN) or

- using a DSN-less configuration. For ease of use, I chose the DSN route.

Essentially this means we describe a name for the connection and use that name when querying.

Choosing to go the other route (DSN-less), means having to use the Athena VPC endpoints. Certainly if there’s sufficient interest in this, we could revise this post to use DSN-less settings.

There are three files:

-

/opt/simba/athenaodbc/Setup/odbc.iniThe main configuration file that specifies the AWS credentials, the DSN and other important things.

-

/opt/simba/athenaodbc/Setup/odbcinst.iniA file the specifies the driver version and the name of the driver.

-

/opt/simba/athenaodbc/lib/64/simba.athenaodbc.iniConfiguration for logging of the Simba driver. It will, for example, create a file called simbaathenaodbcdriver.log in the PATH you specify.

In the Simba documentation, the install path is /usr/local/odbc while on Ubuntu it’s installed in /opt/simba/athenaodbc/ by default.

Now probably the easiest thing to do is to copy /opt/simba/athenaodbc/Setup/odbc.ini to your home directory and rename is as .odbc.ini (note the leading period). Edit the settings in this file and you’re good to go.

I’ve compressed my configs below, but there are other settings you can configure:

[ODBC]

Trace=yes

[ODBC Data Sources]

MyAthena=Simba Athena ODBC Driver 64-bit

[MyAthena]

Description=Simba Athena ODBC Driver (64-bit) DSN

Driver=/opt/simba/athenaodbc/lib/64/libathenaodbc_sb64.so

AwsRegion=eu-west-1

Schema=homeautomation

S3OutputLocation=s3://myS3-big-data-bucket/athena-queries/

AuthenticationType=IAM Credentials

UID=AKIA<....>

PWD=gEc<....>xkU28v

Things you may wonder about:

MyAthena in the configuration above is the DSN I’ll be using in my connections

UID is your AWS AccessKey

PWD is your AWS Secret AccessKey

AuthenticationType allows us to use this simple auth. If you’re using Microsoft Active Directory, the Simba driver allows authentication through federated credentials from AD. Other options are also possible.

Now we have this in place, it’s time to test our connection.

To do this one can use the isql client supplied by the unixodbc package. Simply start it as follows:

$ isql MyAthena

If everything worked, then you should see Connected!

Typing help electricmeter will initiate a query to Athena.

+---------------------------------------+

| Connected! |

| |

| sql-statement |

| help [tablename] |

| quit |

| |

+---------------------------------------+

SQL> help electricmeter

| TABLE_QUALIFIER | TABLE_OWNER | TABLE_NAME | COLUMN_NAME | DATA_TYPE | TYPE_NAME | PRECISION |LENGTH | SCALE | RADIX | NULLABLE | REMARKS | COLUMN_DEF | SQL_DATA_TYPE | SQL_DATETIME_SUB | CHAR_OCTET_LENGTH | SEQ_IN_INDEX | IS_NULLABLE | USER_DATA_TYPE |

| AwsDataCatalog | homeautomation | electricmeter | date | 12 | string | 255 | 255 | | | 1 | | | 12 | | 255 | 1 | YES | 0 |

| AwsDataCatalog | homeautomation | electricmeter | reading | 7 | float | 24 | 4 | | 2 | 1 | | | 7 | | | 2 | YES | 0 |

SQLRowCount returns -1

2 rows fetched

SQL>

Sure enough, two rows describing my dataset - one being the date of the electrical reading and the other being the actual reading.

Step 3: Connecting Athena to LibreOffice … through LibreOffice Base

So now the only thing left to do is to get LibreOffice Calc to see this table. To do this, we need to create a new database connection in LibreOffice Base. Simple enough really. Just fire up LibreOffice Base

$ soffice --base

Connection settings outlined in the images below:

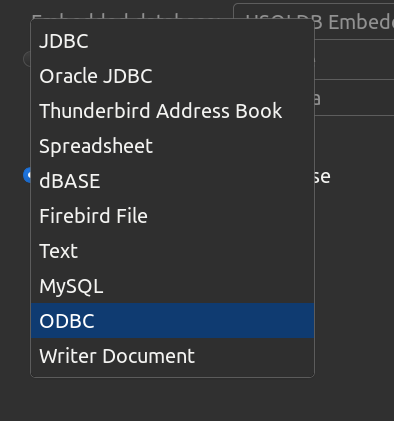

(1) Options for which driver to select

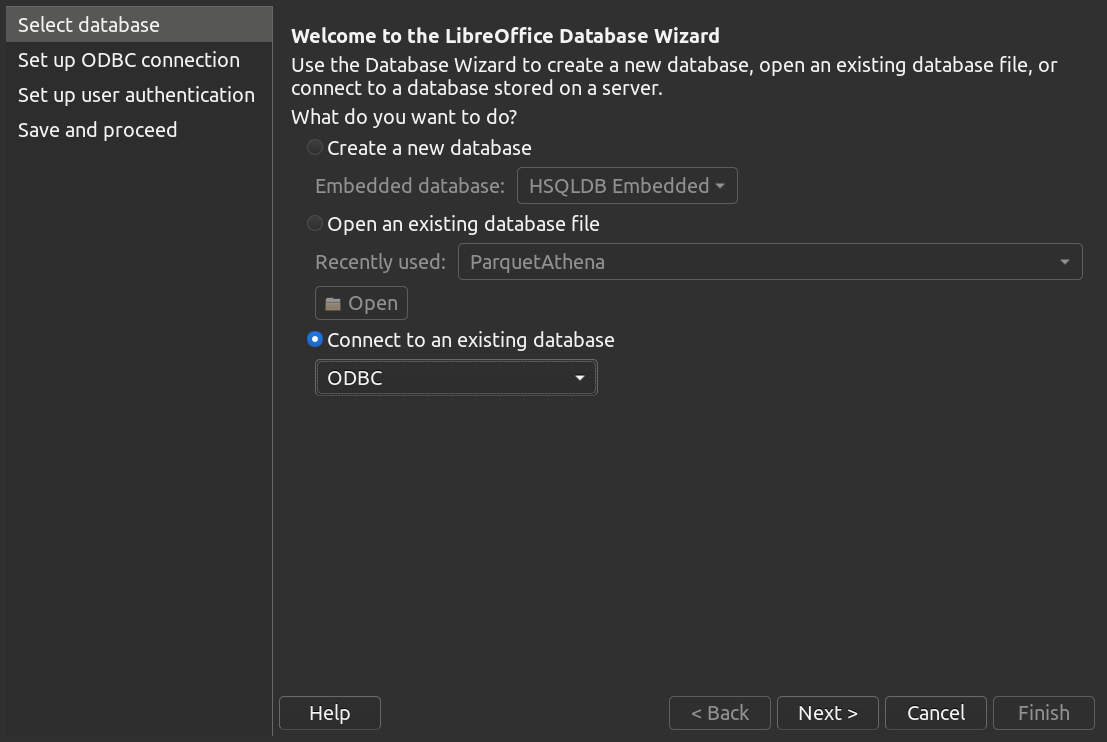

(2) Register a new connection in Base

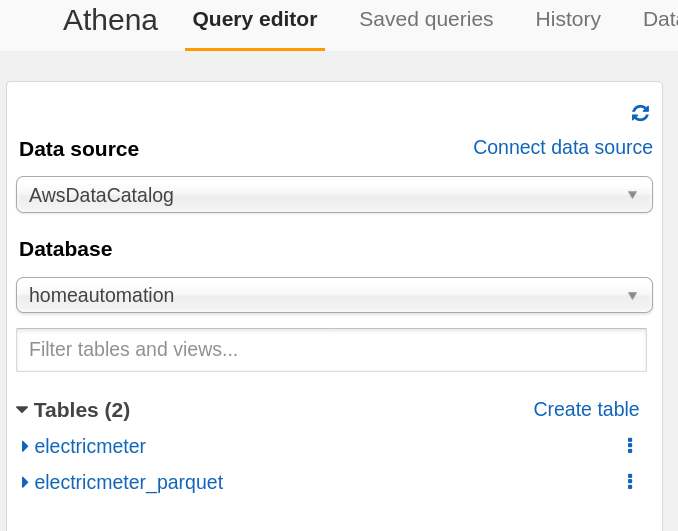

(3) The tables in Athena

(4) Now name the connection and save it

It might be helpful too to hit the “Test Connection” button to make sure it all works

Registering a new DB connection is done! Now it’s time to use this thing.

NOTE: All the steps above only need to be done the first time. After the connection is made, all [new] tables created in Athena will be visible to this connection.

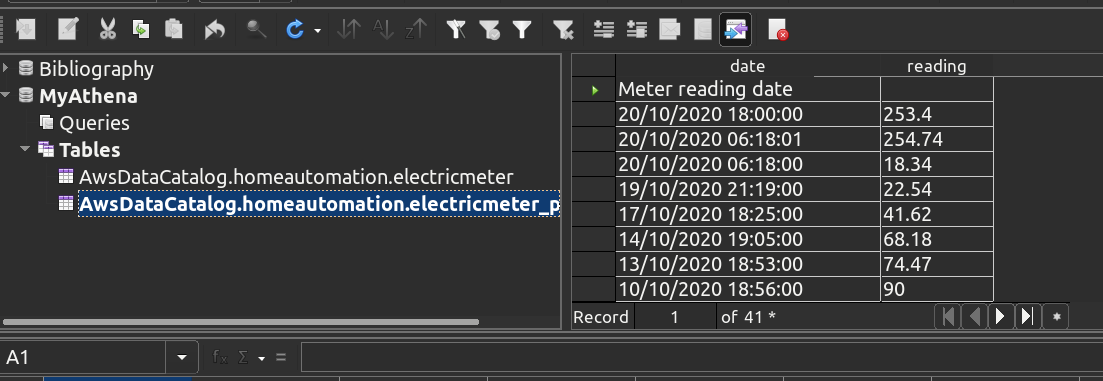

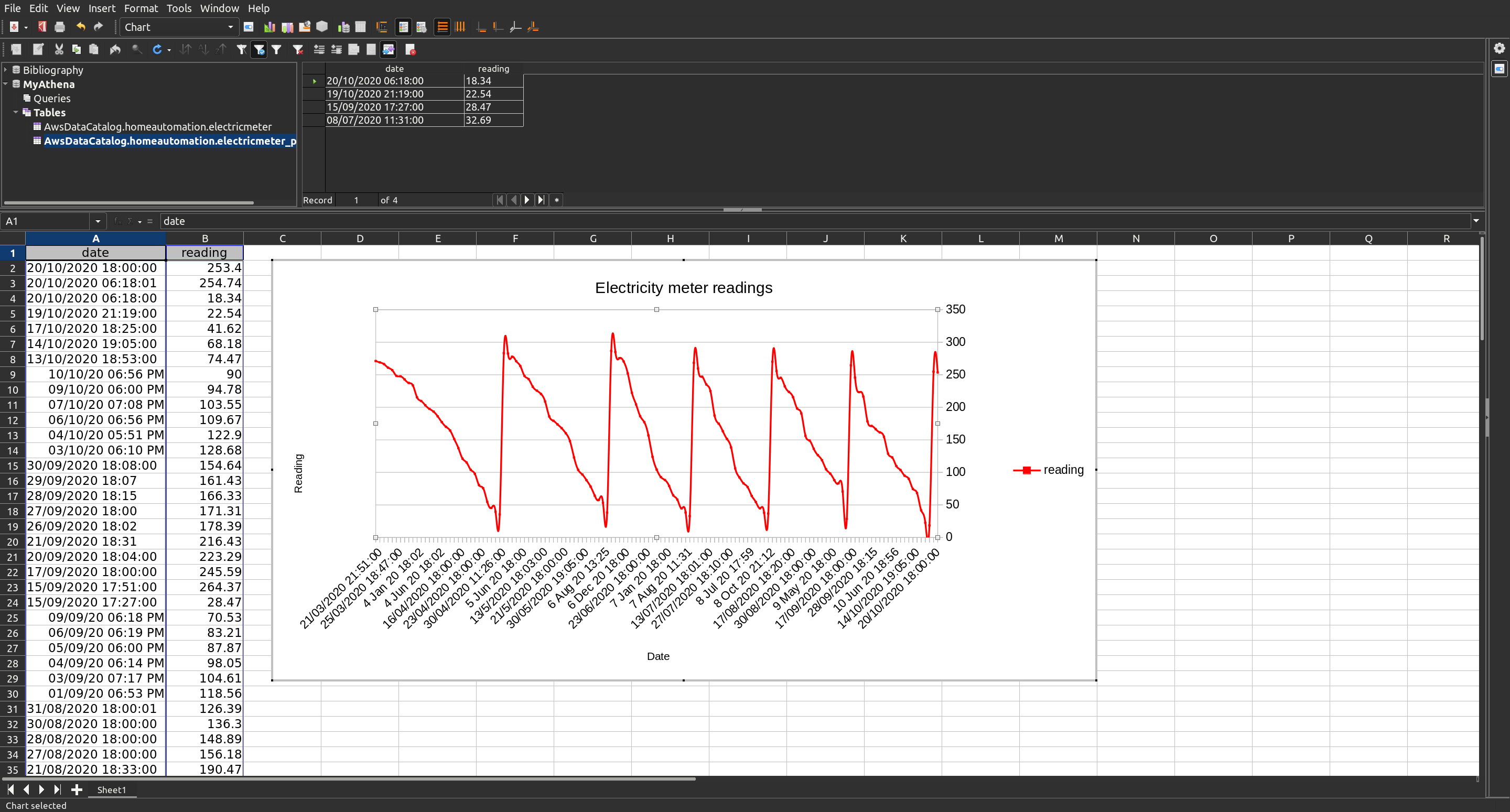

Open LibreOffice Calc and hit <Shift> + <Ctrl> + <F4> which will open the Data Sources window. You should see your database connection MyAthena as an option alongside the default one Bibliography.

Opening MyAthena will show the tables we have available to us.

Step 4: Graphing our data

Now let’s grab some of the data.

Last but not least: CloudTrail

It would be good to know how these queries look in AWS CloudTrail. If you’ve got access to CloudTrail, then

aws cloudtrail lookup-events --lookup-attributes AttributeKey=EventName,AttributeValue=StartQueryExecution|jq -M '.Events[0]|.CloudTrailEvent|fromjson'

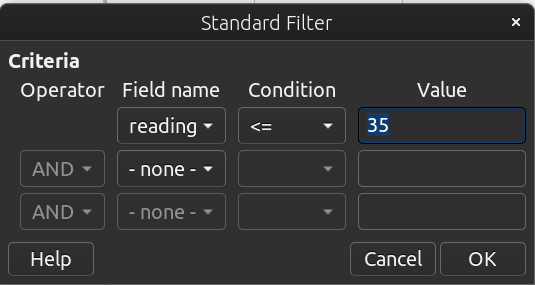

This should show output along these lines (here I’ve only taken the most recent Event for the StartQuertExecution API call, which selected records where the reading was less than 35)

{

"eventVersion": "1.05",

"userIdentity": {

"type": "IAMUser",

"principalId": "AIDAxxxxxxxxxxxxxxxJT",

"arn": "arn:aws:iam::xxxxxxxxxxxx:user/hamish",

"accountId": "xxxxxxxxxxxx",

"accessKeyId": "AKIAxxxxxxxxxxxxxWWT",

"userName": "hamish"

},

"eventTime": "2020-10-28T16:21:42Z",

"eventSource": "athena.amazonaws.com",

"eventName": "StartQueryExecution",

"awsRegion": "eu-west-1",

"sourceIPAddress": "xxx.xxx.xxx.xx",

"userAgent": "aws-sdk-cpp/1.7.365 Linux/5.4.0-7642-generic x86_64 GCC/4.9.2",

"requestParameters": {

"queryString": "SELECT * FROM \"AwsDataCatalog\".\"homeautomation\".\"electricmeter_parquet\" WHERE ( \"AwsDataCatalog\".\"homeautomation\".\"electricmeter_parquet\".\"reading\" <= 35 )",

"clientRequestToken": "AE646CD5-......-CE28",

"queryExecutionContext": {

"database": "homeautomation"

},

"resultConfiguration": {

"outputLocation": "s3://myS3-big-data-bucket/athena-queries/"

}

},

"responseElements": {

"queryExecutionId": "399xxxxxxxxxxxxxxxxxxxxxxxxxxxxxx230"

},

"requestID": "2eaxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxf5b",

"eventID": "390xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxa4b",

"eventType": "AwsApiCall",

"recipientAccountId": "xxxxxxxxxxxx"

}

References

Disclaimers:

It should be noted that there may well be better ways of getting access to the S3 data than the way proposed in this blog; so certainly explore those. This was primarily aimed at highlighting that the data in the data lake should be accessible to as many as possible.

Last modified on 2020-10-28